🔆 About Me

I am an advanced algorithm engineer at the Tongyi Large Model Business Unit, Alibaba Token Hub, where I work on bringing Qwen post-training into real-world business scenarios and creating value from it. I received my Ph.D. from Zhejiang University in 2019, advised by Prof. Yaowu Chen, and my B.Eng. from Chu Kochen Honors College, Zhejiang University.

🎓️ Research Interests

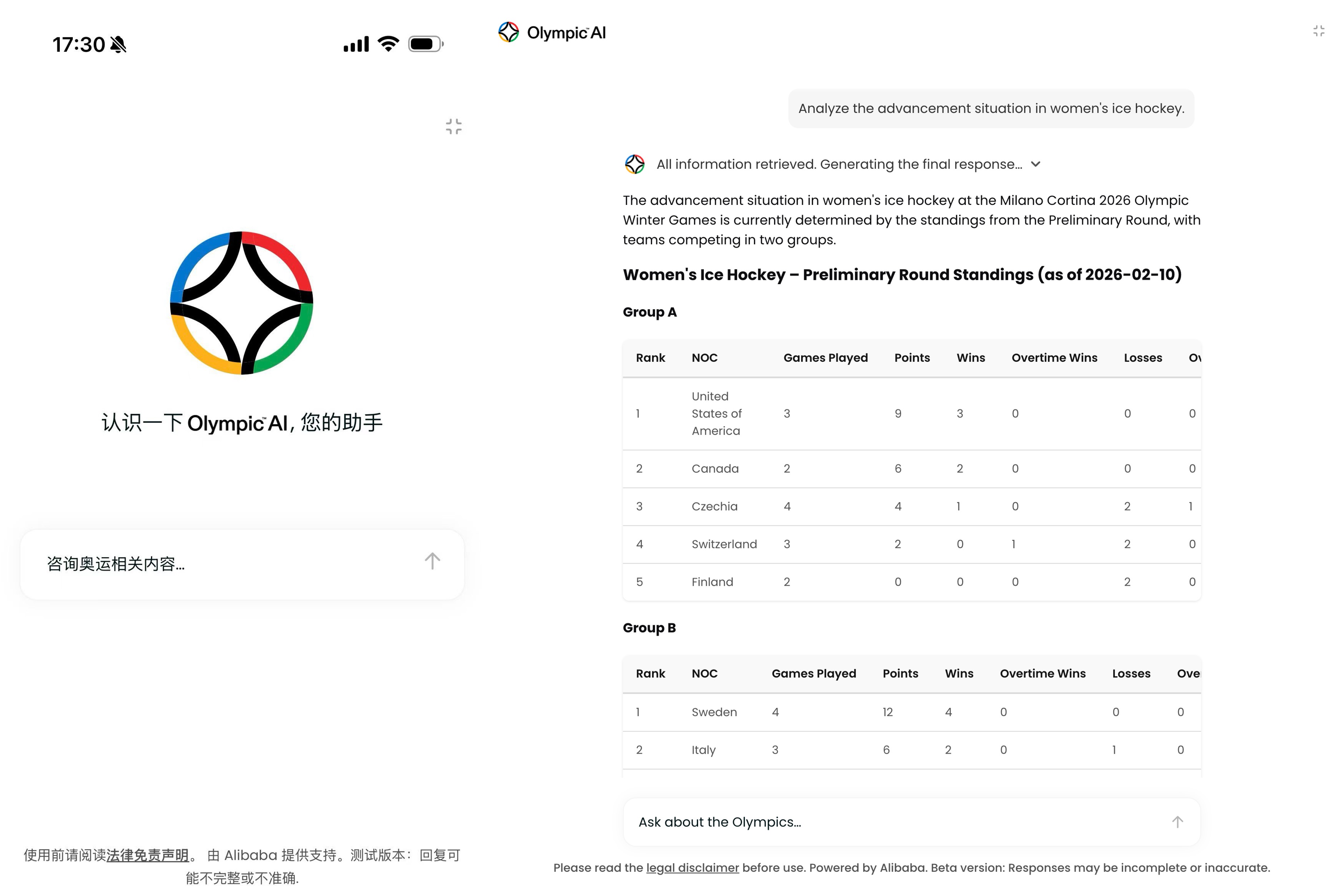

My research focuses on large language models (LLMs). I build key components of the post-training pipeline (Data/Trajectory Curation, Training Strategy, Reasoning Scaffolding, and Fine-grained Benchmarking) to deeply optimize Planning & Tool-Use capabilities at both the model and system levels, reducing hallucination and completing user tasks accurately.

I lead a team that has delivered domain-specific LLM solutions across verticals including international sports events such as the Olympics and education in China. For the Milan Winter Olympics, we built the official Olympic large model on Alibaba's Qwen; it went live on the olympics.com website during the Games, serving users worldwide.

🎖️ We are hiring! Looking for talented interns and full-time researchers in LLM research and applications. Learn more →

🔥 News

- Apr 2026 Interact-RAG accepted at ICLR'26: breaking the black-box RAG paradigm by enabling LLM agents to actively manipulate retrieval.

- Dec 2025 Thinking Speed Control accepted at NeurIPS'25 as a Spotlight: the first dynamic fast/slow thinking switch for reasoning models.

- May 2025 StruXGPT2 accepted at ACL'25: achieving 100% knowledge injection performance with only 5% of the training corpus.

- May 2025 ROPO accepted at ICML'25: noise-robust preference alignment without external models.

- Feb 2025 ROUTE accepted at ICLR'25: multi-task collaborative Text-to-SQL for open-source LLMs.

📜 Professional Services

Programme Committee: NeurIPS (2023–2025), ICLR (2023–2024), ICML (2023–2024), CVPR (2024), AAAI (2024), KDD (2023)

Journal Reviewer: IEEE TIP, TCSVT